The Oxford English Dictionary recognised ‘google’ as a verb in 2006, and its active form is about to gain another dimension. One of the most persistent anxieties amongst those executing remote warfare, with its extraordinary dependence on (and capacity for) real-time full motion video surveillance as an integral moment of the targeting cycle, has been the ever-present risk of ‘swimming in sensors and drowning in data’.

But now Kate Conger and Dell Cameron report for Gizmodo on a new collaboration between Google and the Pentagon as part of Project Maven:

Project Maven, a fast-moving Pentagon project also known as the Algorithmic Warfare Cross-Functional Team (AWCFT), was established in April 2017. Maven’s stated mission is to “accelerate DoD’s integration of big data and machine learning.” In total, the Defense Department spent $7.4 billion on artificial intelligence-related areas in 2017, the Wall Street Journal reported.

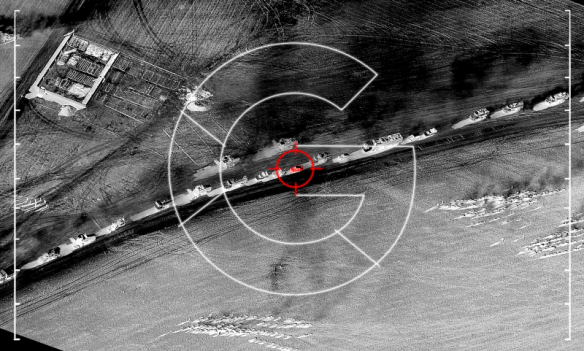

The project’s first assignment was to help the Pentagon efficiently process the deluge of video footage collected daily by its aerial drones—an amount of footage so vast that human analysts can’t keep up, according to Greg Allen, an adjunct fellow at the Center for a New American Security, who co-authored a lengthy July 2017 report on the military’s use of artificial intelligence. Although the Defense Department has poured resources into the development of advanced sensor technology to gather information during drone flights, it has lagged in creating analysis tools to comb through the data.

“Before Maven, nobody in the department had a clue how to properly buy, field, and implement AI,” Allen wrote.

Maven was tasked with using machine learning to identify vehicles and other objects in drone footage, taking that burden off analysts. Maven’s initial goal was to provide the military with advanced computer vision, enabling the automated detection and identification of objects in as many as 38 categories captured by a drone’s full-motion camera, according to the Pentagon. Maven provides the department with the ability to track individuals as they come and go from different locations.

Google has reportedly attempted to allay fears about its involvement:

A Google spokesperson told Gizmodo in a statement that it is providing the Defense Department with TensorFlow APIs, which are used in machine learning applications, to help military analysts detect objects in images. Acknowledging the controversial nature of using machine learning for military purposes, the spokesperson said the company is currently working “to develop policies and safeguards” around its use.

“We have long worked with government agencies to provide technology solutions. This specific project is a pilot with the Department of Defense, to provide open source TensorFlow APIs that can assist in object recognition on unclassified data,” the spokesperson said. “The technology flags images for human review, and is for non-offensive uses only. Military use of machine learning naturally raises valid concerns. We’re actively discussing this important topic internally and with others as we continue to develop policies and safeguards around the development and use of our machine learning technologies.”

As Mehreen Kasana notes, Google has indeed ‘long worked with government agencies’:

A 2017 report in Quartz shed light on the origins of Google and how a significant amount of funding for the company came from the CIA and NSA for mass surveillance purposes. Time and again, Google’s funding raises questions. In 2013, a Guardian report highlighted Google’s acquisition of the robotics company Boston Dynamics, and noted that most of the projects were funded by the Defense Advanced Research Projects Agency (DARPA).