Here is the second installment of my essay on an airstrike on three vehicles in Uruzgan, Afghanistan on 21 February 2010 that has become one of the central examples in critical discussions of remote warfare. The first installment is here.

***

0245

At 0245 on 21 February 2010 three giant black MH-47 Chinook helicopters sent the dust whirling into the darkness as they lifted off from Firebase Tinsley, a remote US military outpost surrounded by thick mud walls, HESCO barriers and barbed wire on a bluff above the Helmand River in north western Uruzgan. [47]

The MH-47s were regularly used to insert and extract US Special Forces; equipped with terrain-following radar, they could fly fast and low, usually at night. On this occasion they were escorted by an AC-130H Spectre gunship (call sign SLASHER03) from the Combined Joint Special Operations Air Detachment at Bagram Air Field, north of Kabul. The helicopters carried a 12-member team from the 3rd Special Forces Group – Operational Detachment Alpha (ODA) 3124 [48] – the JTAC (Joint Terminal Attack Controller) assigned to liaise with the supporting aircraft, their four Afghan interpreters and their light six-wheeled all-terrain vehicles. Also on board were 30 Afghan National Police officers and 20 Afghan National Army soldiers (including Afghan Special Forces).

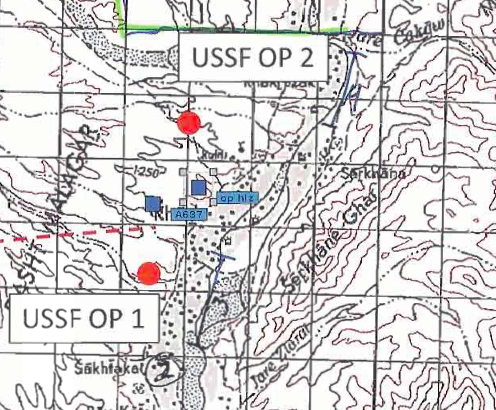

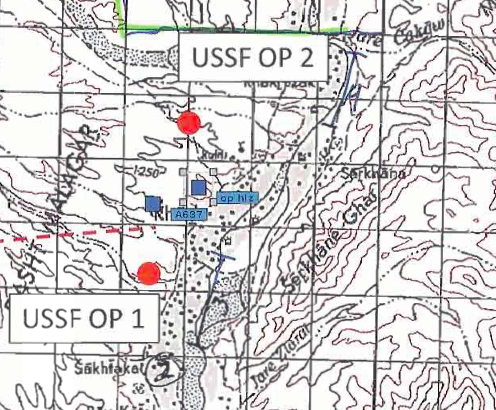

It was a short flight, 10 minutes and 20 km north to a landing zone above the village of Khod in Shahidi Hassas district (Figure 2). This is an arid, mountainous region, but Khod straggles along a sinuous river valley where over many generations the construction of an extensive irrigation system, an intricate maze of clay-lined ditches and channels, has created a fertile green zone. It is none the less a desperately poor area, with high levels of unemployment, devoted largely to herding and subsistence agriculture.

There were some cash crops, however, and Shahidi Hassas was one of three districts in Uruzgan where opium poppy cultivation was concentrated. Between 1997 and 2001, when the Taliban were in power, the cultivation of opium poppies had been forbidden, but production had since increased and was now an important source of revenue for the insurgency. [49]

Even so, the purpose of this joint US-Afghan mission – codenamed Operation Noble Justice – was not poppy eradication. [50] It was a cordon-and-search operation designed to go through the village bazaar, a network of alleys lined with small shops and markets, and the surrounding q’alats or compounds – high-walled residential complexes containing separate homes for close family members – to find a suspected ‘factory’ making Improvised Explosive Devices (IEDs).

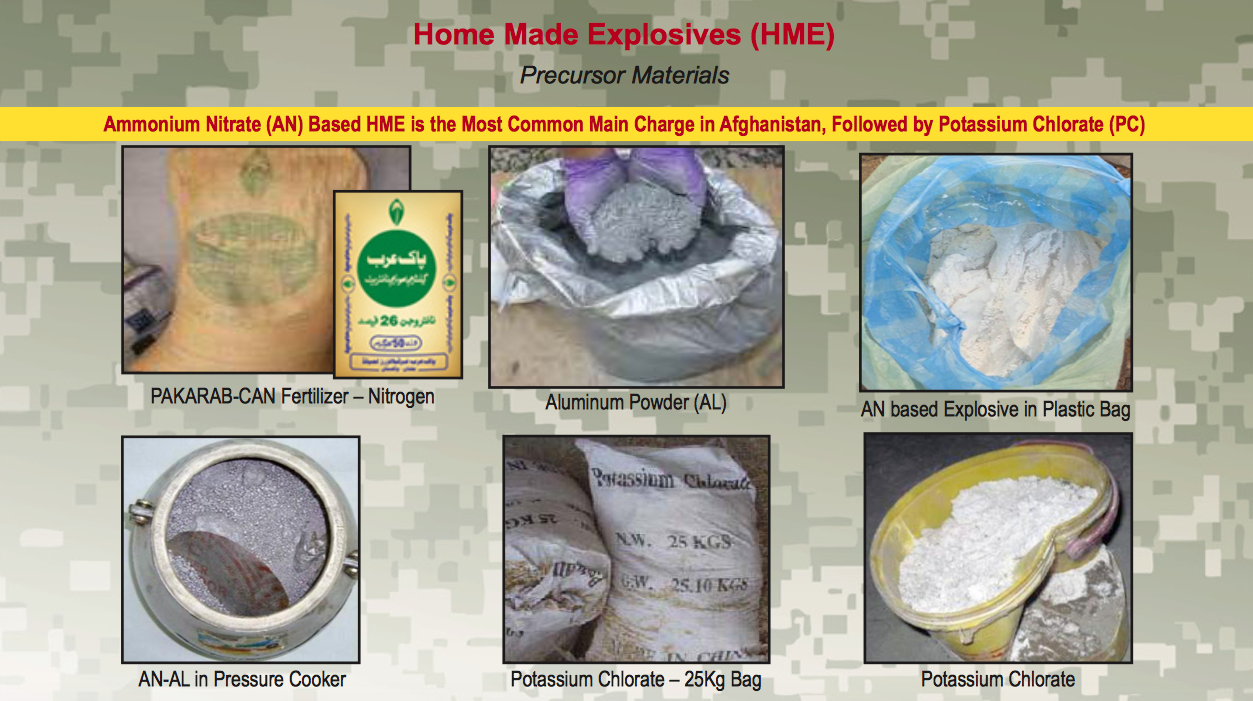

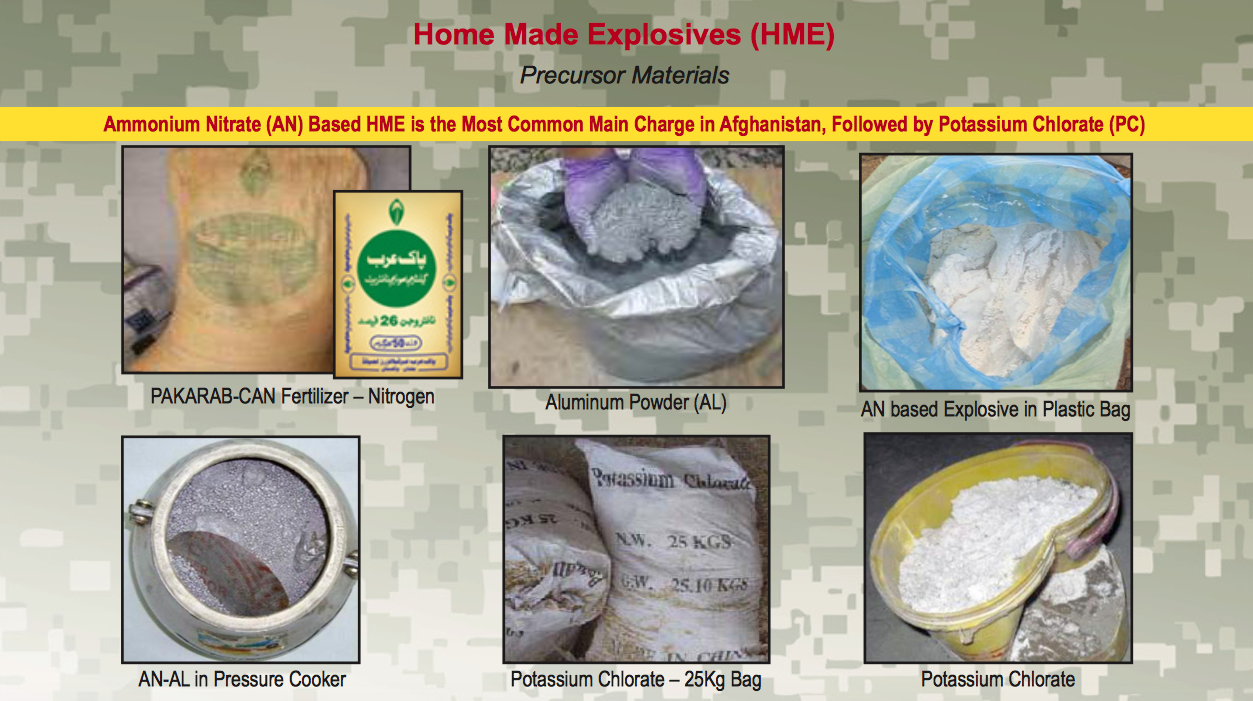

Often described as the Taliban’s weapon of choice, IEDs were – and remain – an overwhelming cause of military and civilian casualties in Afghanistan. Most are roadside devices triggered by command wire, radio signal, mobile phone or pressure plate. As its name suggests, an IED is not any one thing – it is improvised from diverse, cheap components and emerges within extended networks of supply, manufacture and emplacement [51] – and its assembly is not tied to any particular buildings either. The IEDs used by the Taliban typically relied on Calcium Ammonium Nitrate (CAN) fertilizer, made in two commercial plants in Pakistan and smuggled across the highly porous border. [52]

To the unsuspecting eye sacks of fertilizer in an agricultural community might seem innocuous, and in many cases they surely were, but the coalition forces knew the special significance of CAN (which was illegal) and they were accompanied by a trained military dog to seek it out. During their search of the village they eventually seized 100 sacks of CAN together with 1,000 machine-gun rounds, containers of home-made explosives, radios and batteries (p. 1992). [53]

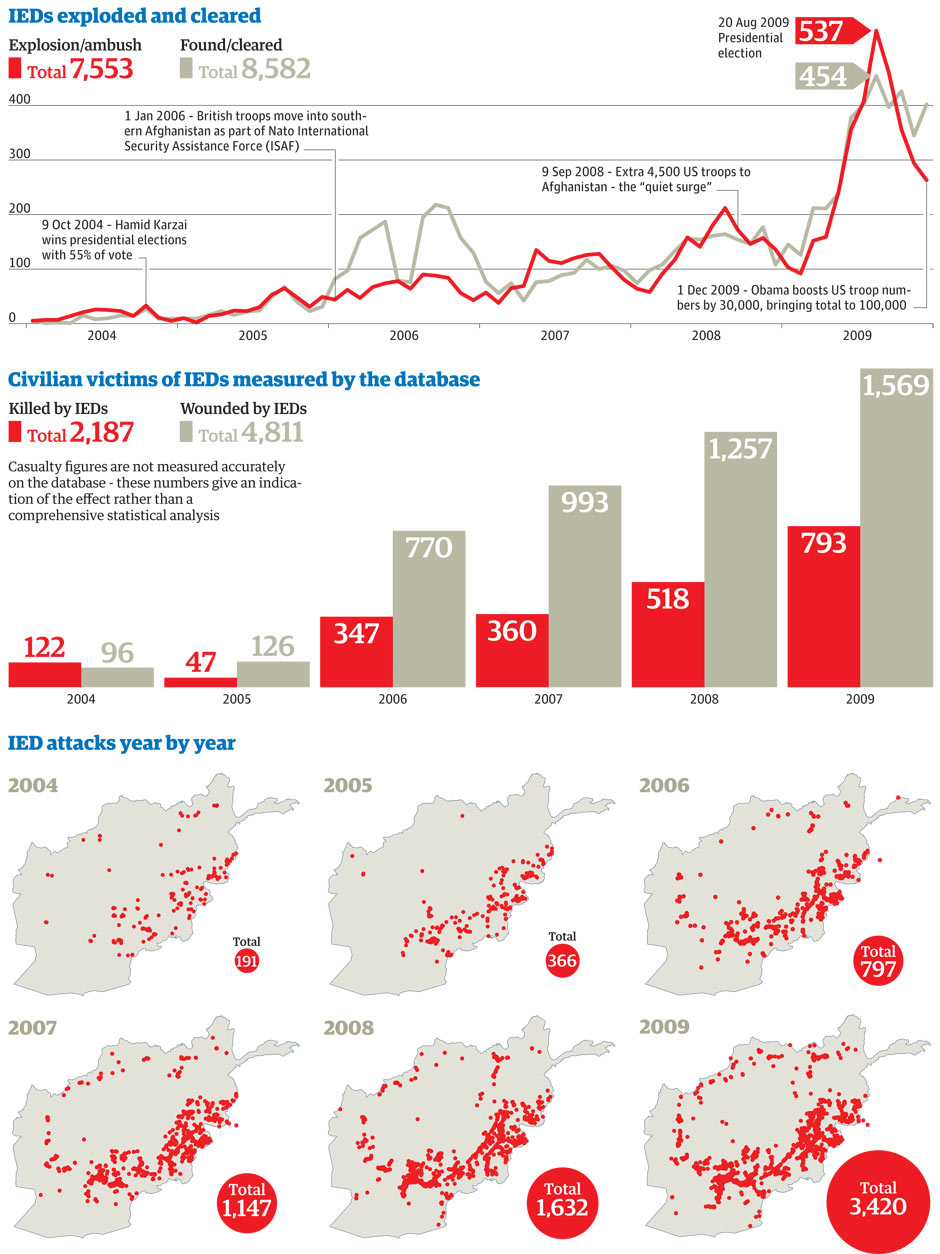

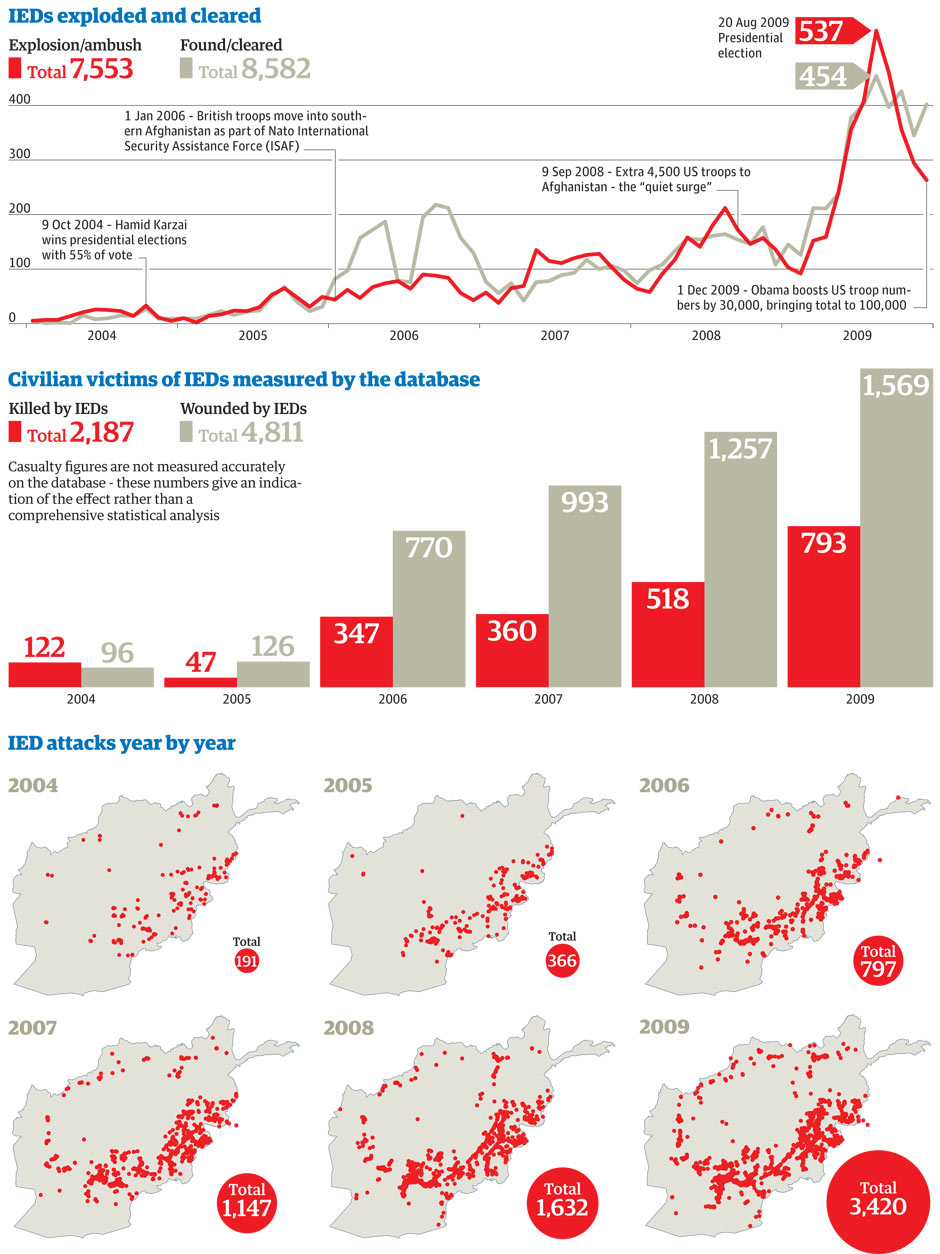

Although Operation Noble Justice was nothing out of the ordinary – it was classified as a level 1 mission, which meant that it posed a ‘medium risk’ to the troops with some ‘potential for political repercussions’ [54] – its importance transcended the local. Effective IED incidents in Afghanistan, excluding explosions that resulted in no casualties and devices that were cleared before they could detonate, had virtually doubled every year since the US-led invasion in 2001: in 2006 there were 127 of them, followed by 206 in 2007, 387 in 2008 and 820 in 2009. [55]

The situation by early 2010 was grim in the extreme, but it was particularly serious in the south. In the previous twelve months 67 per cent of all IED detonations and discoveries took place in the provinces of southern Afghanistan, including Uruzgan whose central regions were desperately insecure (Figure 3).

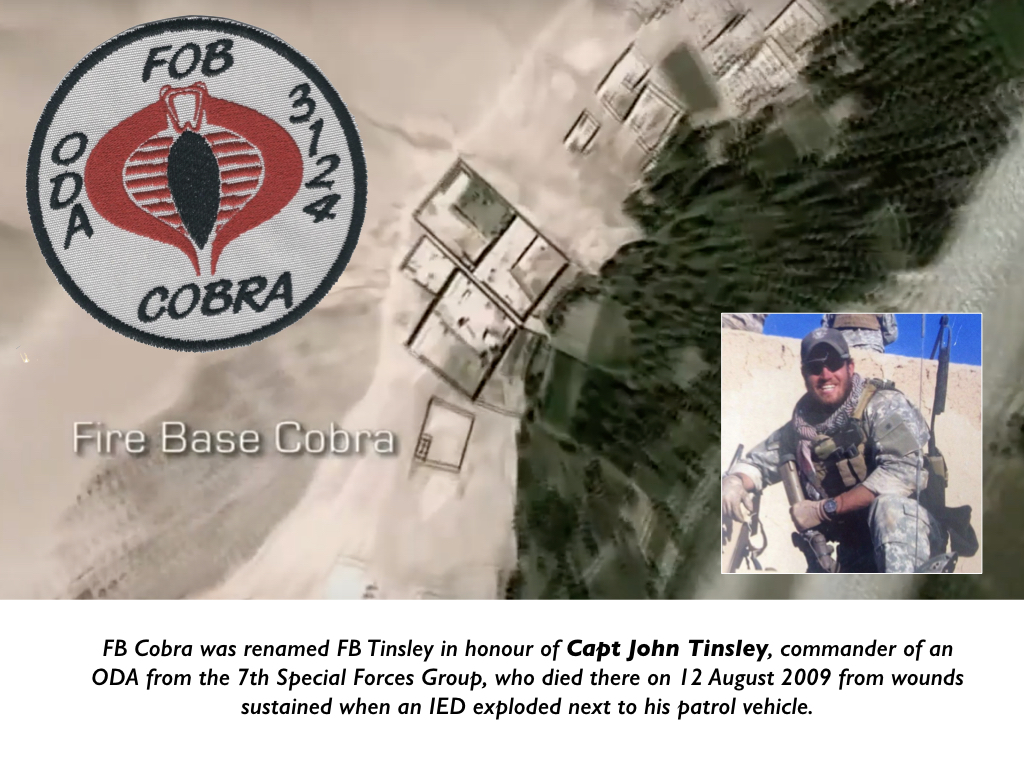

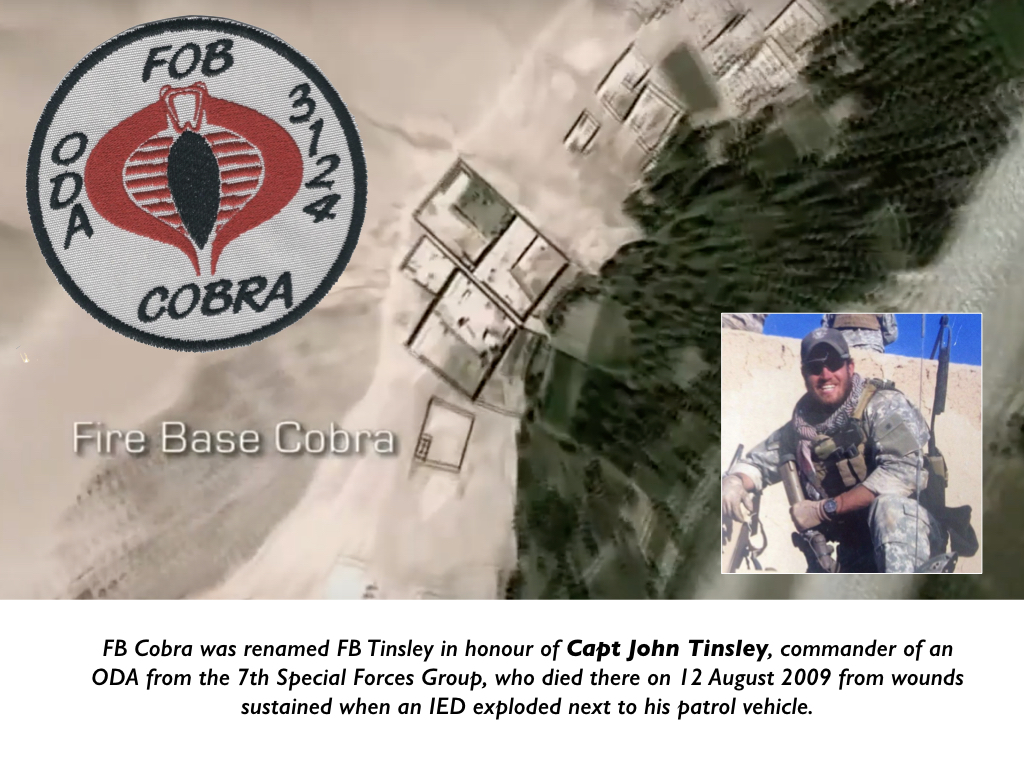

These considerations underlined the tactical importance of the operation at Khod, but ferreting out an IED factory must have had a more direct significance for the Special Forces. Their base had been called Firebase Cobra until it was renamed in honour of Capt John Tinsley, commander of an ODA from the 7th Special Forces Group based there, who had died six months earlier from wounds sustained when an IED exploded next to his patrol vehicle. When that ODA went up to Khod on a ten-day mission ‘Captain Tinsley got killed,’ the assistant detachment commander of ODA 3124 said, ‘so we knew it was a bad area’ (p. 1567).

The dangers showed no sign of diminishing, and Lt Col Brian Petit, ODA 3124’s battalion commander at Special Operations Task Force–South at Kandahar Air Field, was under no illusions. [56] He admitted that all his forces could do in their forays from their firebases was ‘prevent the Taliban running the flag up the pole’, and he could not ‘see us winning those places outright’ (p. 1079). Some of the Special Forces missions outside the wire were humanitarian – using their team medics to provide medical and dental care to the civilian population, for example [57] – but their primary purpose was to ‘disrupt, deny and interdict’ the Taliban, and prevent them from launching attacks on the ‘priority areas’ established by ISAF’s Regional Command–South (RC-S) (p. 1079). Yet in doing so SOTF-South was not part of ISAF; it was part of US Forces–Afghanistan and its Operation Enduring Freedom (OEF). [58] In a report that was completed 18 months before the Uruzgan attack Human Rights Watch gave two reasons why their missions were more likely to lead to civilian casualties.

First, Special Forces typically operated in small groups and were relatively lightly armed – as here – and so they ‘often required rapid support in the form of airstrikes when confronted with superior numbers of insurgent fighters’ (the same situation the Ground Force Commander (GFC) thought his forces faced in Khod).

Second, Operation Enduring Freedom was governed by Rules of Engagement that permitted a much lower threshold for employing lethal force than ISAF and, crucially, permitted ‘anticipatory self-defense’ (which was why the GFC called in the airstrike). [59]

Although they shared the same commander – Gen Stanley McChrystal – OEF’s chain of command was independent from ISAF’s, and their mandates were different: OEF was a counter-terrorism operation with combat as its leading edge, whereas ISAF was charged with pursuing a counterinsurgency strategy that, since 2008, had been directed at ‘winning hearts and minds’ as much as conducting fire missions. [60] To be sure, they were not wholly separate. The lines between counter-terrorism and counterinsurgency were inevitably blurred; US forces served in both ISAF and OEF; and there was necessarily co-operation between the two. For Operation Noble Justice ISAF’s Regional Command–South (RC-S) provided resources from its Forward Operating Base (FOB) Ripley 20 km south of the provincial capital at Tarin Kowt: the combat helicopters called in by the Special Forces to strike the three vehicles came from there, and Regional Command–South also supplied helicopters for medical evacuation from there and provided medical treatment for the casualties at military hospitals there too.

But these were all emergency responses, and Regional Command–South had neither been involved in the planning of Operation Noble Justice nor kept in the loop as it unfolded. Its British commander, Maj Gen Nick Carter, made his views crystal clear: ‘It’s unacceptable that someone can shit on my doorstep and sit back and watch me mop the shit up’ (p. 579). [61] In short, the operation at Khod was superimposed over but, until something went wrong, conducted largely outside the matrix of other military operations. The need to avoid one mission confounding another was supposed to be addressed through weekly joint briefings, but although Regional Command–South’s Operations Center at Kandahar Air Field was just 600 meters from SOTF-South’s Operations Center, Carter complained that there was no functional connection between the two (p. 580). [62]

This disjuncture set the parameters within which Operation Noble Justice took place, but it must have affected the mental landscape within which ODA 3124 operated too. Since 2006 ISAF’s lead force in Uruzgan had been the Dutch, whose headquarters at Kamp Holland – a vast compound adjacent to FOB Ripley – included a Provincial Reconstruction Team whose activities centred on small-scale aid programs in two or three districts (‘ink-spots’) 25 km or more south and east of FB Tinsley. The Provincial Reconstruction Team had achieved a precarious success, but there was little sign of the ink-spots coalescing, and the Special Forces cannot have been alone in thinking that the Dutch were only able to control their fractured and limited ‘white space’ because the Special Forces kept the Taliban outside them. [63]

ODA 3124’s last combat rotation had been from January to August the previous year, when they had been deployed in the same part of Uruzgan (p. 985). ‘We were the only ODA out here last time,’ their captain explained, and they had been involved in 22 separate exchanges of fire with the Taliban (p. 1359). Since their return from the United States on 15 January, just over a month earlier, they had already survived two more bruising encounters with the Taliban. Petit described them as ‘the single most active ODA we have’, and he told McHale ‘they have conducted more operations and have a more full-spectrum understanding than any other ODA on the battlefield’ (p. 1095). [64] They knew the area around Khod and they knew too that when another ODA conducted an operation there in November it had been involved in a two-day fire fight with the Taliban and uncovered home-made explosives, weapons caches and IEDs.

This was a seasoned team, and their captain – who acted as Ground Force Commander (GFC) in overall charge of the joint US-Afghan operation in Khod – was a veteran of three Afghanistan tours. Yet even he was apprehensive; on a previous mission his team had been ambushed as soon as they left the helicopters and five of his soldiers had been wounded (p. 930). The JTAC, an Air Force technical sergeant who was assigned as relay between the GFC and the pilots of the supporting aircraft, was jumpy too. He also had three tours under his belt, and had been back in Afghanistan since the middle of September. Since this was his last mission before heading home his ‘level of anxiety was high’ (pp. 1388, 1499), he told McHale, and he had survived enough remote-controlled IED attacks at FB Ripley that he fully expected to be hit by another one ‘after coming off the birds’ (p. 1485).

0300

Their fears were not immediately realised, but the signs were ominous. The priority on touchdown was to establish radio communications with the ODA’s battalion headquarters, SOTF-South, and at 0305 the GFC initiated a stream of summary observations of insurgent activity (called SALT reports: ‘Size, Activity, Location, Time’) to SOTF-South’s Operations Center at Kandahar Air Field. [65]

As soon as his forces started to establish a cordon and to secure supporting fire positions on the bluffs overlooking Khod they could see through their night-vision goggles shadowy figures perching on roofs, jumping over walls and into compounds or ducking into the cover of the irrigation ditches. There was little the GFC could do about it, apart from setting Afghan army and police patrols moving through the alleys, because he was not authorised to initiate the search until 0615: this was a daylight operation not a ‘night raid’. [66] The restriction followed from its classification as a level 1 mission. ‘We didn’t have the intelligence to get a level 2 rating’, the Fires Officer at SOTF-South explained, but that added a layer of difficulty – and danger – because the MH-47s only flew between dusk and dawn so that ‘the team had to do an INFIL [infiltration] at night’ and then ‘sit there and wait for first light’ (p. 720). [67]

It must have been a nerve-wracking wait. The Special Forces were using multi-band inter-team radios to communicate with one another, and while they waited they set them to scan for other transmissions in the immediate area. The GFC had a local interpreter to help co-ordinate the Afghan forces who were with his own men and to interpret any intercepted communications (ICOM). Almost immediately messages were picked up urging the mujahedeen to gather for an attack, and the volume of ICOM rapidly increased.

Spotters had ensured that the Taliban had advance warning that the helicopters were on their way, and much of the chatter involved calls for reinforcements from the villages. At 0325 the GFC reported that the Taliban were ‘moving up from the south with heavy weapons and reinforcements’ (pp. 1889, 1987), though the source of the information was not given. The JTAC (call sign JAG25) was communicating with the aircraft on station via his line-of-sight VHF radio – from time to time he switched to the inter-team band to provide operational updates – and he passed the ICOM frequencies to the commander of the AC-130 gunship that was now circling over the bazaar in support of the ground operation. Its Battle Management Center had more sophisticated electronic equipment to eavesdrop on the Taliban, and its Electronic Warfare Officer had a Pashto linguist on board as a Direct Support Officer to help monitor what was happening. [68]

There were almost certainly other sources of electronic intelligence too, because the Predator that was also circling overhead in support of the mission was equipped with an Air Handler which controlled an on-board device that intercepted and geo-located wireless communications, including cell phones (p. 907). [69] This raw signals intelligence was processed by an exploitation cell, probably from a National Security Agency (NSA) unit forward deployed at Kandahar Air Field, which would have entered its findings into one of the mission chat rooms (p. 589): but any evidence of its (classified) role, if any, is concealed by the report’s redactions. [70]

Although the Taliban knew that the mission had air support, they were more exercised by the aircraft’s firepower than their capacity to intercept communications. Several hours later the Predator’s Mission Intelligence Coordinator (MC) reported that somebody – the source is redacted but it may have been the NSA cell; the information had been posted in their mission room – had picked up that the insurgents were ‘aware of coalition forces eavesdropping on VHF’, and shortly afterwards he expressed his surprise that there was still so much ICOM. ‘These guys are Chatty Cathy’s,’ he told the rest of the crew, and for people who knew their frequencies were being monitored ‘they’re sure talking a lot on them’. [71] Less than an hour after touchdown – long before dawn – a radio message instructed the insurgents to wait until the aircraft had left before attacking, and the GFC attempted to draw the Taliban out from their hiding places by having the AC-130 feint going off station. As soon as it did so further orders were intercepted imposing radio silence ‘in preparation for possible attack’ (p. 1990). But nothing came of it, and at 0440 another message instructed the Taliban to ‘hide weapons and wait until morning when the aircraft will be gone’ and they would be able to see the coalition forces more clearly (p. 1990).

The respite was short-lived. The GFC’s second-in-command, who was overseeing the party establishing a supporting fire position on high ground to the south of Khod, reported seeing headlights flashing from the north. They seemed to mirror lights flashing from the south – ‘a mark and a reciprocate’ (p. 1344) – and the JTAC moved the AC-130 away from its orbit over the village to investigate.

0445

The GFC clearly regarded this sighting as a threat because at 0445 the transcript of his SALT report noted that ICOM chatter indicated ‘AAF elements [Anti-Afghan Forces, i.e. the Taliban] are moving in two groups, one to the north and one to the south, in an attempt to surround CF [coalition forces]. Stating that this is “our” area and we cannot afford to allow CF to operate in this area or we will lose local support’ (p. 1891; my emphasis). [72]

The possibility that the Taliban planned to surround coalition forces – to trap them in Khod – marked what turned out to be an ever-present horizon of concern.

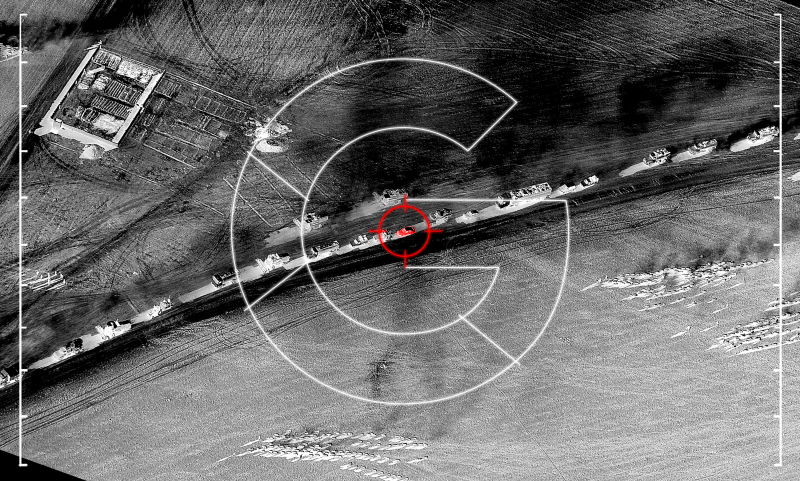

At 0454 the commander of the AC-130 said they had found the northern headlights: he told McHale that ‘looking through the [infra-red] sensors it appeared to be trucks full of hot spots’ (p. 1418). There were three vehicles, roughly five to six kilometres (three to four miles) from the nearest coalition forces at Khod, and the AC-130 started to track them as they moved south.

The JTAC immediately responded ‘those vehicles are bad’ but added that they would have to work on ‘trying to get enough to engage’ – a reference to positive identification of a legitimate military target (PID) [73] – and that ICOM chatter suggested that a Taliban ‘Quick Reaction Force’ was coming in. The flight commander told him there appeared to be ‘unlawful personnel’ in the back of the vehicles (his emphasis); he gave no reason for regarding them as ‘unlawful’. A few minutes later he reported that the third vehicle had gone and was no longer a factor, but the JTAC was already thinking ahead to an airstrike. At 0503 he announced he was ‘pretty sure we are covered under [ROE] 421 and 422’ – that the Rules of Engagement would allow an airstrike – on the grounds of ‘hostile intent [and] tactical maneuvering.’ [74] The basis for inferring ‘hostile intent’ was the ICOM chatter, which prompted the JTAC to declare the occupants of the vehicles were ‘setting themselves up for an attack’, but the evidence of ‘tactical maneuvering’ was unelaborated. The JTAC knew this was a key term, one of the requirements laid down by the ROE for a pre-emptive strike (p. 1492). It is defined as movement to gain tactical advantage, but the only visual information the JTAC had was that the vehicles were heading in the general direction of Khod. He chose to interpret this as ‘maneuvering on our location’, which must have been an extrapolation from the ICOM chatter, and at 0506 he relayed the GFC’s request for the gunship to ‘engage with [containment] fires forward of their line of movement.’

Even though containment fires would not necessarily involve a direct attack on the vehicles, the commander of the AC-130 was reluctant. The resolution of his aircraft’s infra-red sensors was sufficient to identify ‘hot spots’ but not the composition of the passengers – following standard military protocol, the key diagnostic was gender and age: the presence of women and children [75] – and so he asked if the MQ-1 Predator that was circling over a compound in Khod could ‘take a look at these people’.

The GFC agreed and the JTAC advised both aircraft that ‘we are going to hold on containment fires and try to attempt PID, we would really like to take out those trucks’ (my emphasis): a course of action that clearly implied a preference for the use of lethal force against the vehicles rather than merely impeding or curtailing their movement. One vehicle had stopped at a compound on the west side of the river, while the other remained on the east, and the commander of the AC-130 reported that they were now flashing their lights and signalling to each other. He sent the co-ordinates to the Predator crew via mIRC, and used his laser target designator (‘sparkle’) to mark the location, until at 0509 the Predator pilot (call sign KIRK97) reported they had ‘eyes on’ one of the vehicles, ‘personnel in the open, definite tactical movement.’ The commander of the AC-130 was in a hurry to talk the Predator on to the second vehicle because his aircraft only had about 20 minutes loiter time left. This increased the uneasiness of the GFC and the JTAC, and at 0512 the JTAC radioed (again the emphasis is mine): ‘Need to destroy all these vehicles and all the people associated with them, we believe they are bad.’

Before they could do that, however, he reminded both pilots that they had to ‘do the best we can to get PID.’ [76] The JTAC was particularly exercised by the possibility of mortars in the back of the vehicles. He knew the local Taliban had used them in the past, and when he asked the Predator crew to search for them the sensor operator duly called ‘possible mortars’ on the intercom, though the pilot’s radio message to the JTAC at 0513 was more qualified: ‘personnel in the open, by the vehicles, moving tactically, definitely carrying objects, at this time we cannot PID what they are.’

In these early exchanges the Predator pilot followed the JTAC in describing what the people on his screen were doing as ‘tactical movement’ – not once but repeatedly – and his unprompted gloss on what was a vital phrase in the identification of a legitimate military target is instructive. ‘Tactical movement’ meant they were ‘moving tactically,’ he told McHale, ‘as opposed to moving in a random manner that you would expect normal civilians to move or drive’ (p. 908; my emphasis). This is a revealing observation for two reasons.

First, it begs the question of what counted as ‘normal’ to American eyes. The interpretation of cultural difference is not a constant but varies over time and space. Interviews with coalition troops in Afghanistan have suggested that those ‘new to an area and unfamiliar with what “normal” was, were more likely to read hostile intent into otherwise innocuous actions.’ But familiarity did not breed content, and towards the end of a tour ‘soldiers became less willing to assume risks and more likely to err on the side of using force to protect themselves in ambiguous situations.’ Their responses were also affected by a sort of areal essentialism, in which the actions of individual Afghans were read off from and reduced to the pre-existing characterisation of the area in which they took place. That gesture took several different forms, the product of a varied combination of experience, prejudice and mission intelligence; specifically in this instance troops who had recently incurred losses, especially in ‘combat-heavy areas’, were found to be ‘more likely to respond aggressively’ in such situations. [77] The relevance of these findings to the Uruzgan attack is necessarily conjectural; McHale was repeatedly told that ODA 3124 ‘knew’ the area, but they had experienced several violent encounters with the Taliban there in the recent past and, like other military personnel, they knew it as what the Fires Officer at SOTF-South called a ‘pretty bad place’ (p. 721). ‘We had plenty of knowledge that this was a bad area,’ he told McHale, ‘from people being there before’ (p. 724). The operation at Khod was the last mission of the JTAC’s tour, but it is impossible to say whether this influenced his reading of the situation – which was in any case heavily dependent on the interpretations provided by the Predator crew and the screeners whose familiarity with the area was at best indirect and limited to a largely visual field.

Second, and following directly from that last limitation, the Predator pilot’s appeal to an (unspecified) ‘normal’ effectively passed the burden of being identified as a civilian to those caught in the Predator’s field of view. The issue of civilian status is contentious, to be sure, and the reference to ‘normal civilians’ serves as a reminder that many in the military (and beyond) insist that the Taliban are civilians too. [78] But here – and elsewhere – the indeterminacy of civilian status is compounded by the priority given to the visual register. Those framed by the Multi-Spectral Targeting System did not know they were under surveillance, still less that they were suspected of being Taliban – they only became dimly aware of the Predator when they stopped at dawn to pray – and even had they known, what would possibly have constituted an adequate performance of ‘civilian-ness’ by those in front of the lens to those hidden behind it? In short, since the occupants of the vehicles were all ‘normal civilians’, what could they possibly have done differently that would have spared them from being attacked? [79]

These considerations had a heightened significance because the JTAC and the GFC were wholly dependent on their ‘eyes in the sky’ for information about the progress of the vehicles and the activities of their occupants. The aircraft greatly extended the direct field of vision of the Special Forces on the ground, even of the fire parties on the bluffs, but this prosthetic effect was circumscribed in two important ways. First, while the commander of the AC-130 told the JTAC that the resolution of its infra-red sensors was not enough to make out the composition of the occupants, the full-motion video (FMV) feed from the Predator was limited too, even when the sensor operator switched from infra-red to colour once the sun came up. Its clarity depended in part on the ability of the sensor operator to focus the cameras – which could be confounded by cloud or dust (p. 1405) – and as the image stream was compressed to accommodate bandwidth constraints and then distributed across multiple networks so its quality was degraded and, significantly, varied from place to place. It was never crystal clear. [80] The best imagery was available at the Ground Control Station at Creech; even the screeners complained about what they had to work with. The primary screener testified that ‘with our FMV quality [which ‘isn’t that great’: p. 1392] there is only so much analytical [work] we can do’ (p. 1390). Other observers at other posts in the United States and in Afghanistan had similar difficulties, compounded by the video feed sporadically freezing and even breaking.

Equally important, for most of the time the image was restricted to a chronically narrow field of view. ‘We don’t pass any information [about the composition of the passengers],’ the Safety Observer told McHale, not altogether accurately, ‘because we don’t know ourselves. We are looking through a soda-straw at it’ (p. 1453). [81] Early in the mission the sensor operator had decided to ‘go with the pickup [the lead vehicle] as a primary unless we get directed otherwise’, and the primary screener explained that ‘because of our [field of view] and having three vehicles we only had the front pick-up and part of the second vehicle in frame most of the time’. Whenever the vehicles stopped the sensor operator would ‘zoom out and see all three vehicles,’ she added, ‘but we could not see the passengers unless they got out’ (p. 1394). Even then it was difficult to say much about them from the black and white infra-red imagery that streamed from the Predator throughout the night (and the sensor operator frequently reverted to IR after dawn too). ‘It is not like you are staring from me to you across the room,’ the Safety Observer told McHale, when ‘I can give you a detail[ed] description. They had been following them all night, and IR … is black and white, and hot and cold’ (p. 1456). As Lisa Parks notes, ‘aerial infra-red imagery turns all bodies into indistinct human morphologies that cannot be differentiated according to conventional visible light indicators,’ a process of indistinction that is in fact doubled because forward-looking infra-red (FLIR) images are by their very nature ‘distorted before a satellite link digitally processes them’ – and degrades them further – so that even at its highest magnification the video feed would have neared 20/200 visual acuity: ‘the legal definition of blindness for drivers in the United States’. [82]

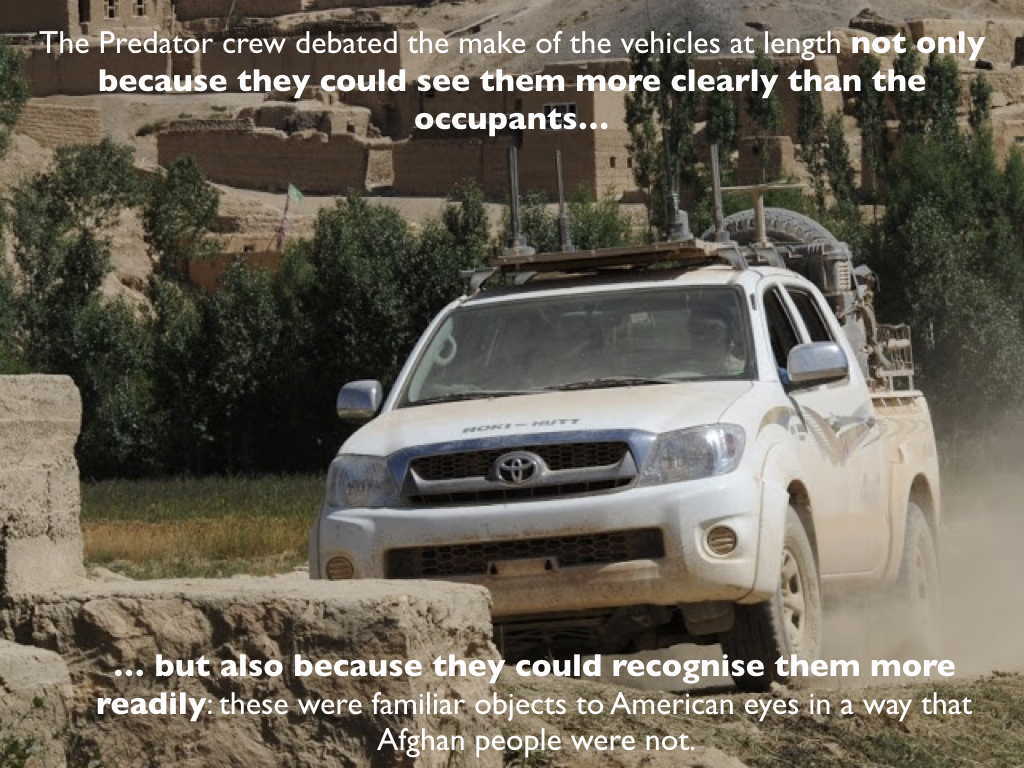

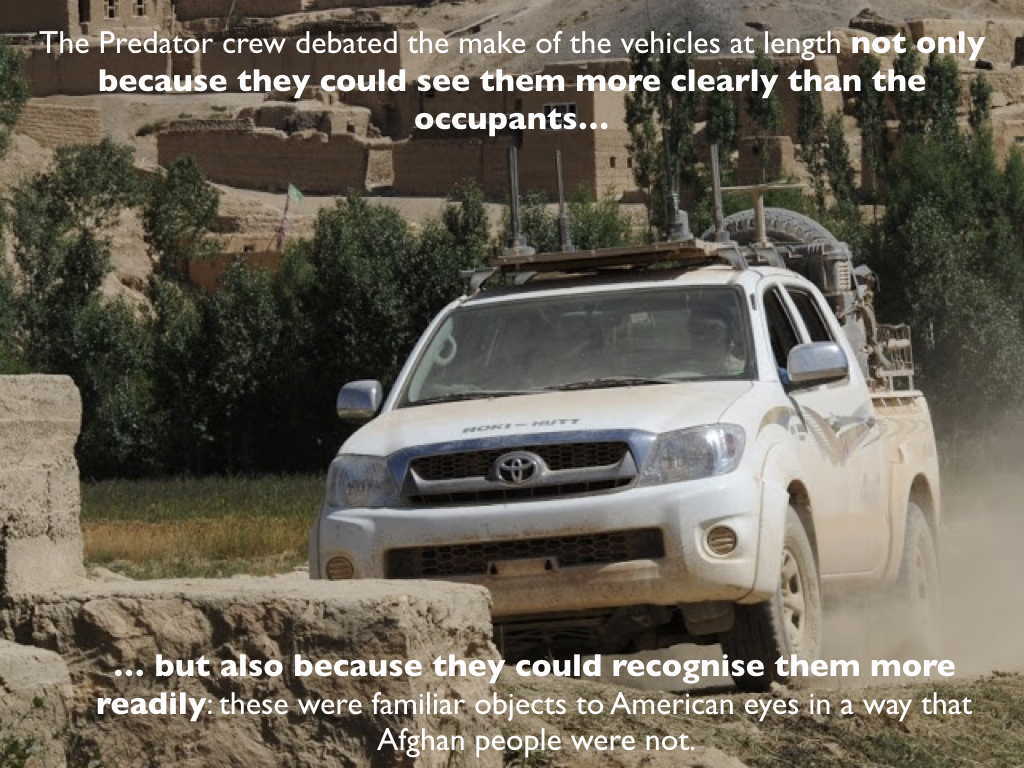

In fact when the sensor operator zoomed in the most extended discussions amongst the Predator crew focused on the make of the vehicles not the make-up of their occupants. They started before the sun came up. ‘What kind of truck is that?’ the MC wondered. ‘Definitely not a Hummer, right?’ the Predator pilot responded. ‘No,’ the sensor operator told them, ‘just a regular SUV, it’s got a roof rack, kind of boxy and bulky, maybe a Toyota…’ Ten minutes later he resumed the discussion: ‘OK, that’s a Chevy Suburban… well, maybe it’s not quite as long, it’s got barn doors in the back, I want to say it’s a Suburban, well it almost doesn’t look long enough, could be a barn-door [Chevrolet] Tahoe, it’s definitely full size, and I don’t see many full-size out here other than Chevys…’ Two hours later they were still debating. ‘See that white top on that thing?’ asked the sensor operator, ‘It’s removable … squarish front end… that’s a Suzuki, isn’t it?’ The MC disagreed – it was too big – but 40 minutes later they returned to the subject again. The pilot thought it ‘kinda looks like a jeep,’ but the sensor operator reckoned that if so it was ‘an odd variant… a lot older one…’ The pilot then changed his mind – it was more ‘like a Tahoe’ – only for the sensor operator to change his mind too: ‘the bumper hanging out in front like, you know, screams jeep,’ he said, and when he got ‘a good shot of the door closing’ he was convinced ‘it looked like a jeep.’ They both noted the ‘seven-slot grill’, and the conversation switched to the Toyota – now they could make out the model name – and the sensor operator pointed out that one of the SUVs had ‘the same type of lug pattern’ (p. 1956). The discussion was inconclusive and they returned to it in short order. 
‘The more I look at it,’ the sensor operator said, the SUV ‘resembles a Ford Explorer, like the mid-90s type, more square-boxed type, probably early 90s…’ ‘The hood doesn’t quite match up with the lines,’ he continued, ‘but the windows certainly do. The windows and doors look just like a Ford Explorer’ (p. 1961). It seems clear that the Predator crew debated the makes of the vehicles at length not only because they could see them more clearly than the occupants but also because they could recognise them more readily: these were familiar objects to American eyes in a way that Afghan people were not. The trade-off between field of view and resolution then mattered all the more because once the sensor operator zoomed in on the lead vehicle, which was a Toyota Hilux – they all agreed on that – its occupants, mainly jammed in the back of the pickup and exposed to the aerial gaze, were all male. The passengers in the other two vehicles included women and children. [83]

Second, the ability of the JTAC and GFC to visualise the situation was limited by their lack of a ruggedized laptop that would have provided direct access to the FMV feed from the Predator. The GFC said they had elected not to bring a ROVER (Remotely Operated Video Enhanced Receiver) with them ‘because we have [a] Predator with two analysts that can look with colour on a ten-foot screen’ whereas – the redactions now cut in but they evidently revolved around the limitations of the ROVER – it’s ‘just not feasible’ for a mission that had ‘to move tactically in a mounted manner’ on all-terrain vehicles (p. 936). [84] Matters were more complicated, however, because although a ROVER would have been the closest ground receiver to the Predator, its reception would have been far from perfect. Even when a ROVER is mounted on an ATV, another JTAC explained, it relies on a direct line-of-sight link with the aircraft, and so ‘it’s in and out, intermittent, and it’s really scratchy.’ With the small, portable ROVER 5 ‘you have to have it nice and flat, any buildings, trees obstruct the signal’ and during the day with the sun glaring on the screen ‘you are basically looking at your face, it’s a huge mirror.’ He claimed that in the Operations Center at Kandahar Air Field SOTF-South had ‘huge TVs, [and] they can see a crystal clear picture because they get it directly from the satellite’ (p. 1532). This was an exaggeration; reception at Kandahar may have been better, and their screens were certainly bigger, but the imagery was far from clear there too. ‘It was not true that we had a significantly clearer picture,’ Petit told me, and ‘in fact both the SOTF and the GFC tended to rely on the interpretive text chat [mIRC]’ from the Predator crew and the screeners ‘more than our scratchy picture’. And, as I’ve explained, the screeners had far from clear imagery too.

Vision is clearly more than a bio-technical capability – it is always culturally mediated – but that does not mean that its technical frames are unimportant. Whether the FMV feed would have given the JTAC and the GFC a better visual sense of the situation is perhaps moot; but a ROVER would also have given them access to multiple mIRC chatrooms used by the Predator crew and other observers; so too would a data-enabled satellite phone, but the JTAC confirmed that ‘we [did] not see the mIRC chat on the ground’ (p. 1488). These were all serious limitations, but whatever difference the presence of a ROVER might have made the Predator crew remained wholly unaware of its absence, and this mattered because it made the JTAC unusually reliant on the pilot’s verbal ability to paint the developing picture as accurately as possible. [85] This in turn made their choice of words vital. Nasser Hussain was right to remind us:

‘There is no microphone equivalent to the microscopic gaze of the drone’s camera. This mute world of dumb figures moving about on a screen has particular consequences for how we experience the image…. In the case of the drone strike footage, the lack of synchronic sound renders it a ghostly world in which the figures seem un-alive, even before they are killed. The gaze hovers above in silence. The detachment that critics of drone operations worry about comes partially from the silence of the footage.’ [86]

That rings true, in so far as those watching the FMV feed were watching a silent movie, and apart from the JTAC’s radio and its ambient noise the only sound from the ground reached them indirectly and textually via ICOM and its mIRC translation. But any ‘detachment’ was repeatedly overcome by the exchanges with the JTAC – the pilot, who had more than 1500 hours flying Predators, told McHale that ‘I do experience stuff along with the guys on the ground… I am in that situation along with them, just trying to support them the best I can’ (p. 916) [87] – and, as a direct result of that intrinsically asymmetric intimacy, by the subtitles added by the Predator crew that turned the objects of their gaze into marionettes and mannequins. [88] Sometimes they even issued imaginary instructions to those they were watching. There was of course no way for them to know how accurate their ventriloquism was, and yet it materially shaped the decision field of the GFC. As one senior officer explained, ‘the guy on the ground … has to trust what he is being told because he cannot see the convoy’ (p. 872). [89]

These verbal communications were affected by technical issues too. Radio messages between the AC-130 and the Predator were disrupted from time to time by static, but mIRC between the two was ‘intermittent most of the time’ as the gunship repeatedly lost connectivity (p. 1420). The JTAC’s communications with the Predator pilot depended on his line-of-sight VHF radio, and the link became weak or even broke altogether depending on the path of the aircraft and the intervening terrain (p. 1487). [90] Even so, the JTAC saw himself as a faithful relay giving the GFC ‘an exact play of what the aircraft [were] seeing’ (p. 1487); in turn, the Predator pilot praised the JTAC for ‘doing a good job telling us what the [GFC] is thinking’ (p. 1954). Their co-construction of the developing situation depended on the correlations they established between the reports on the vehicles from the aircraft and ICOM chatter – although the location of the messages could not be established unless the NSA cell came through – and the GFC testified that ‘for every [insurgent] radio transmission there was an equal ground reaction during the entire movement’ (p. 1363). [91] This interpretive work, the performative statements shared with the commander of the gunship and the crew of the Predator in particular, was also profoundly culturally mediated – hence the informed discussion of the vehicles rather than their occupants – and differed from person to person. Even had the Predator’s FMV feed been uninterrupted, the JTAC had a ROVER laptop with perfect reception, and the image stream that appeared on multiple screens across the network been high-definition and crystal-clear, this could not have rendered the battlespace transparent. As the Director of the Combined Joint Special Operations Task Force (CJSOTF–A)’s Operations Center at Bagram Air Field – the level above SOTF-South – acknowledged, ‘What I see may be different from what someone else might interpret on the ISR’ (p. 822).

There were differences in interpretation that became ever more important as the night wore on, but at this time the commanders of both aircraft agreed the situation was becoming extremely serious, and yet both were on the brink of having to withdraw – for real this time: the very situation ICOM chatter revealed the Taliban were waiting for. The Predator had been re-tasked to Marjah in Helmand province 150 miles to the south, where Operation Moshtarak, a large-scale counterinsurgency offensive involving 15,000 coalition troops, had been under way for a week or more, but the pilot had held off as long as possible and he asked his MC to explain that ‘we are part of a tactical engagement right now and we can’t move.’ [92] While they waited for permission to remain on station the crew continued to watch their screens, and the pilot told the JTAC they could see ‘personnel in the open, by the vehicles, moving tactically’ – again – ‘definitely carrying objects: at this time we cannot PID what they are.’ [93]

The AC-130 had flown down from Bagram Air Field to escort the Chinooks to Khod, and although its commander had received a waiver ‘to take us all the way to low fuel’ (p. 1419) he knew the time was fast approaching when he would have to go off station too. [94] He clearly believed they had sufficient information for a strike, and at 0513 he prompted the JTAC: ‘How’s your imagery looking?’ He received no reply – presumably because the JTAC had no imagery – and one minute later he increased the pressure. ‘We’re all set up, up here,’ he told the JTAC, ‘standby your intentions for fire mission.’ The JTAC told McHale he knew exactly what the flight commander meant: ‘They have confirmed and they think they had enough to fire and are ready for my call’ (p. 1354). He responded with a promissory note: ‘Ground Force Commander’s intent is to destroy the vehicles and the personnel.’ The JTAC also relayed the Predator crew’s identification of individuals ‘moving tactically’ and holding ‘cylindrical objects in their hands.’ A redacted passage may explain how they had become ‘cylindrical’ in the interval, but the crew was still not certain what they were and this did not constitute the positive identification of weapons they were looking (and hoping) for. ‘Is that a [fucking] rifle?’ demanded the Predator pilot, and when the sensor operator was unable to confirm it – largely because of the limitations of infra-red (‘Maybe just a warm spot from where he was sitting,’ the sensor operator explained: ‘The only way I’ve ever been able to see a rifle is if they move them around [or] … with muzzle flashes…’) – the pilot muttered ‘I was hoping we could make out a rifle…’

The commander of the AC-130 was undeterred and announced that ‘our intention is to engage first on the east side’ – because the people there were closer to the compounds and could escape more easily [95] – so he was happy to maintain ‘the chain of custody’ (a leading phrase) while the Predator continued to track those on the west side. By this time he reckoned the vehicles were 7.8 km (almost five miles) from the nearest coalition forces in Khod. But this was a straight-line distance and, as the GFC later conceded, ‘you cannot do a straight-line distance’ in terrain like this (p. 1350). It would have taken them some considerable time to reach the village on the poor, unmade tracks that criss-crossed the mountainous region, and this evidently gave the JTAC pause too: ‘… but with the distance they are away from our objective…’ he radioed at 0522. The rest of his message was drowned by simultaneous transmissions between the aircrew, but the JTAC was clearly convinced that the occupants of the vehicles were planning to attack. ‘Getting ICOM traffic,’ he continued, ‘and [combined with] the maneuvering of these personnel, we believe their ultimate intent is to come down in this area and engage friendlies’.

But now the JTAC’s previous confidence deserted him. ‘At this point,’ he conceded, accepting and relaying the doubts of the GFC, ‘the current rules of engagement don’t fit’. The reluctance to engage was prompted by both the failure to identify weapons and the distance of the vehicles from Khod (which had a direct bearing on the imminence of any threat they posed). As the aircraft continued to track the vehicles the quest for weapons did not let up. The Predator pilot later told McHale there were ‘probably 200 instances where we saw basically long cylindrical objects’, which he said they always looked for because they could be rifles or rocket-propelled grenade launchers. He then radically revised the numbers, estimating that they saw ‘about 30’ weapons in total, before conceding that there had been only one (perhaps two) definite calls of weapons from the screeners watching the FMV feed at Hurlburt Field (p. 910). In fact McHale’s report noted that ‘throughout the over three and a half hours the vehicles were observed, only three weapons were positively identified’ (p. 23) – whether these were different weapons or the same weapon identified three times is unclear; the primary screener thought the most they saw at any one time was two weapons (p. 1389) – and that the screeners made only one call that was not prompted by the Predator crew (p. 33). Just as important, the crew’s inference could perfectly properly be reversed: AK-47s are commonplace in Afghan culture, and to have detected so few weapons among so many men was hardly evidence that the Taliban were massing at the gates. [96]

It did not take the Predator crew long to adduce further grounds for suspicion. The sensor operator reported a group of people whom the pilot said ‘look to be lookouts’. They probably were lookouts, since the adults knew they were in Taliban country; their understandable unease would explain why the men ‘appeared to provide security during stops’ (p. 36). [97] But the same flawed logic that interpreted the presence of any weapons as an indication of impending attack transformed a prudent precaution by wary travellers into a sign of a ‘threat force’ (p. 36). The faulty inference was encouraged by ICOM chatter in which a Taliban commander instructed his men to move towards the bazaar in Khod. This was immediately linked to the vehicles: ‘What we’re looking at,’ the JTAC relayed at 0524, elaborating his earlier claim, ‘is a QRF [Quick Reaction Force]; we believe we may have a high-level Taliban commander…’ The GFC later explained that half a dozen HVIs or ‘High-Value Individuals’ – targets on the Joint Prioritised Effects List [98] – were active in the area around Khod, moving between safe houses (or ‘bed downs’), and at first he suspected that the men jammed into the lead vehicle were the HVI’s ‘personal security detail’ (pp. 1356-7). The Predator crew had already noticed one person standing apart from the others, and prompted by the JTAC’s inference the pilot speculated that this was ‘one of their important guys, just watching from a distance’ and the sensor operator chimed in with ‘he’s got his security detail.’ When the commander of the AC-130 asked them to turn their sensors towards a group of people on the other side of the river the Predator pilot reinforced that interpretation: they still had eyes on a group of men on the west bank, he radioed, with ‘two lookouts, could be definite tactical movement with a commander overwatching, definitely suspicious.’

The GFC said he came to discount the presence of an HVI, and his primary and eventually sole concern was the threat of an enveloping attack on his forces at Khod. [99] The JTAC was still on the same page as the two flight commanders. ‘Due to distance from friendlies we are trying to work on justification’ for a pre-emptive strike, he told them at 0526: it was essential to establish the presence of weapons. By now people could be seen leaving the compound and squeezing back into the pickup and the SUV, and the JTAC radioed: ‘ICOM traffic [suggests] they are getting on the vehicles and moving to our location, sounded like it was in conjunction with what you are looking at.’ The commander of the AC-130 thought so too. ‘What we all took note of in the aircraft,’ he told McHale, ‘was the [ICOM] chatter rallying forces to head towards the objective [Khod], at that moment they all stopped what they were doing and started piling in to the trucks’ (p. 1418). The JTAC believed they now had sufficient evidence to engage (p. 1491), but the GFC remained concerned that the vehicles were too far from Khod to justify a strike and that they had yet to establish PID (which most of them consistently misinterpreted as a sufficient number of weapons to constitute the vehicles as a legitimate target). [100]

NOTES

[47] For additional insight into operations in and around FB Tinsley (formerly FB Cobra), see Steve Haggard’s Inside the Green Berets (Brave Planet Films/National Geographic, 2007).

[48] An ODA (‘the A Team’) is the basic operational unit of US Special Forces. It is commanded by a captain, assisted by a (chief) warrant officer, and includes pairs of specialists in (for example) weapons, engineering, explosive ordnance disposal, communications, and medical support – pairs so that an ODA can operate in two teams of six (as here). In the US military ‘Special Forces’ refers to groups under US Army Special Forces Command (‘Green Berets’); the generic ‘Special Operations Forces’ includes any special operations group (including the Green Berets and also groups from the US Air Force, Navy and Marines and groups that operate under the still more secretive Joint Special Operations Command), all of which fall under the wider umbrella of US Special Operations Command.

[49] There were more than 4,000 ha devoted to poppy cultivation in Shahidi Hassas in 2008. The Taliban’s attitude to its cultivation was complex and increasingly pragmatic. By 2009 there was ‘a consensus that growing poppy is religiously proscribed, yet taxing cultivation and trafficking is justified by war imperatives.’ By then the Taliban had ‘a tolerance of opium [production] that for some commanders border[ed] on dependence’: Addiction, crime and insurgency: the transnational threat of Afghan Opium (UN Office on Drugs and Crime, October 2009) pp. 86-7, 102.

[50] Cf. Erik Donkersloot, Sebastiaan Rietjens, Christ Klep, ‘Going Dutch: counternarcotics activities in the Afghan province of Uruzgan’, Military Review 91 (5) (2011) 43-51.

[51] Jairus Grove, ‘An insurgency of things: foray into the world of Improvised Explosive Devices’, International political sociology 19 (2016) 332-51; idem, Savage ecology: war and geopolitics at the end of the world (Durham NC: Duke University Press, 2019) 113-137.

[52] The government of Pakistan prohibited the export of CAN, since its manufacture was subsidized by the state, and the government of Afghanistan outlawed its use because it was the core ingredient for 70-90 per cent of IEDs: Ben Gilbert, ‘Afghanistan’s Hurt Locker’, Agence France Presse, 10 February 2010; Chris Brummitt, ‘Pakistan fertilizer fuels Afghan bombs, US troop deaths’, Associated Press, 31 August 2011.

[53] For a vividly personal account of one man’s attempt to track down one manufacturer of IEDs in Afghanistan, see Brian Castner, All the ways we kill and die: a portrait of modern war (New York: Simon and Schuster, 2016).

[54] All pre-planned missions required a Concept of Operations (CONOPS) to be submitted for approval by the ODA’s higher command(s); this was a deck of Powerpoint slides developed by the ODA outlining the time, location and type of operation, the level of risk and the resources required. In Afghanistan level 0 was the lowest risk, usually reserved for a combat reconnaissance patrol outside the firebase; level 1 was medium risk, typically a daylight cordon-and-search operation (as here), that might require helicopter transport and Intelligence, Surveillance and Reconnaissance (ISR) support; and level 2 was high risk, usually a night raid that required helicopter transport and ISR. The higher the risk, the higher the approval level required: Maj Edward Sanford, ‘Optimizing future operations for Special Forces battalions: reviewing the CONOP process’, Naval Postgraduate School, Monterey CA, June 2013 (p. 12).

[55] Anthony H. Cordesman and Jason Lemieux, ‘IED metrics for Afghanistan, January 2004 – May 2010’, Center for Strategic and International Studies, 21 July 2010; the original data were provided by the Department of Defense’s Joint IED Defeat Organization (JIEDDO).

[56] The consolidated file refers to both SOTF-South and SOTF-12 but they are the same unit. SOTF-12 identified the 2nd Battalion, 1st Special Forces Group (Airborne), but during this tour all SOTF numbers in Afghanistan were replaced by regional designations.

[57] Elsewhere Petit emphasised the role Special Forces played in ‘village stability operations’ but the weight of his argument was on establishing a ‘dominant’ coalition presence to supplant the coercive authority of the Taliban through the provision of an effective system of ‘security and justice’. This was the essential foundation for development, he explained, but it depended on responding to Taliban incursions with ‘speed, violence of action, and effective but discretionary use of indirect fires’: ‘The fight for the village: Southern Afghanistan, 2010’, Military Review 91 (3) (2011) 25-32.

[58] Operation Enduring Freedom was the official name for the ‘global war on terror’ launched by the United States in response to 9/11, but it is commonly reserved for US combat operations in Afghanistan, 2001-2014.

[59] Human Rights Watch, “Troops in Contact”: Airstrikes and civilian deaths in Afghanistan (September, 2008) p. 37; on the Rules of Engagement see also note 74 below.

[60] Derek Gregory, ‘The rush to the intimate: the cultural turn and counterinsurgency’, Radical Philosophy 150 (2008) 8-23.

[61] ‘NATO forces have complained that OEF operations in their region are not communicated to them, but civilian casualties from airstrikes called in by OEF forces are left for them to address’: Human Rights Watch, Troops in Contact, p. 31. Brig Gen Reeder insisted that the operation had been ‘approved at the [Combined Joint Special Operations Task Force] level with the RC-S [Regional Command–South] consent’ (p. 1189). As Carter’s objections made clear, however, ‘consent’ need not imply ‘consultation’, and RC-S clearly had to work hard to keep up to speed with Special Forces operations; conversely, Petit’s deputy testified that his battle captain usually attended the morning briefing at RC-S though he added ‘that is not required’ (p. 1012).

[62] See also Col Robert Johnson, ‘Command and control of Special Operations Forces in Afghanistan’, US Naval War College, October 2009.

[63] There was in any case friction between the Dutch and US Special Forces over the local alliances each favoured and fostered: see Bette Dam, ‘The story of “M”: US-Dutch shouting matches in Uruzgan’, Afghanistan Analysts Network, 10 June 2010. For discussions of Dutch counterinsurgency in Uruzgan, see Martine van Bijlert, ‘The battle for Afghanistan – militancy and conflict in Zabul and Uruzgan’, New America Foundation, 2010; George Dimitriou and Beatrice de Graaf, ‘The Dutch COIN approach: three years in Uruzgan, 2006-2009’, Small wars and insurgencies 21 (2010) 429-58; Paul Fishstein, ‘Winning hearts and minds in Uruzgan province’, Feinstein International Center, Tufts University, 2012; Christ Klep, Uruzgan: Nederlandse militairen op missie 2005-2010 (Amsterdam: Boom, 2011).

[64] He added that they were ‘not habitually assigned’ to his battalion (which was from the 1st Special Forces Group based at Fort Lewis near Tacoma, Washington): they were ‘a Fort Bragg company’ from the 3rd Special Forces Group. Fort Bragg (North Carolina) is also the home of Joint Special Operations Command (JSOC), but ODA 3124 was part of the ‘white’ Special Forces rather than the ‘black’ Special Forces of JSOC (see note 48), and Petit’s reference to its ‘full-spectrum understanding’ presumably referred to its kinetic and non-kinetic, humanitarian operations. Even so, trouble followed ODA 3124 when it was deployed to Wardak province in 2012, where it was accused of torturing and killing civilians. The only person convicted was its Afghan interpreter, but the criminal investigation remains open: Matthieu Aikins, ‘The A-Team killings’, Rolling Stone, 6 November 2013; ‘Where the bodies are buried: mapping allegations of war crimes in Afghanistan’, at http://wardakinvestigation.com/report/30 (June 2016).

[65] As soon as the ODA was outside the wire primary support passed to its battalion headquarters (SOTF-South at Kandahar Air Field). Its company headquarters (the ODB) at FOB Ripley in Tarin Kowt was ‘left out of the [communication] link once they leave the gate’ (p. 739); the ODB listened to radio communications and did their best to monitor mIRC, but access was selective at the best of times and the electricity supply was often intermittent (p. 742). That morning they also had computer problems so that ‘we weren’t seeing that whole picture’ (p. 746).

[66] Night raids, usually conducted by Special Forces, were the dual to targeted killings in the US kill/capture strategy in Afghanistan: Anand Gopal, ‘Terror comes at night to Afghanistan’, Asia Times, 30 January 2010; Erica Gaston, ‘Night raids: For Afghan civilians, the costs may outweigh the benefits’, Huffington Post, 19 September 2011.

[67] The MH-47s were variants of standard CH-47 Chinook helicopters modified for US Air Force Special Operations Command: hence ‘the birds that we fly only [operate] between the hours of EENT [end evening nautical twilight] and BMNT [begin morning nautical twilight].’ This applied only to the MH-47s; the CH-47s were typically used for other, non-covert missions.

[68] The AC-130 was commonly tasked to support Special Forces operations. It had a formidable arsenal: a Gatling gun, capable of firing 1,800 25 mm rounds per minute; a 40 mm Bofors gun; and a 105 mm howitzer. Its Battle Management Center included a sensor suite (with TV sensors, infra-red and radar) and a communications suite, but their inputs were limited. The resolution level of the sensors did not permit the identification of weapons, for example, and the linguist testified that s/he ‘didn’t really pass along anything much’ (p. 1145). That said, the Fires Officer at SOTF-South claimed that ‘when you mix the movement’ reported from the aircraft, the ICOM ‘and what the Direct Support Officer was monitoring and reporting, this was interesting’ (p. 720).

[69] Afghanistan had 12 million cellphone users (out of a total population of 29 million) and the Taliban frequently used cellphones to pass information and to direct the insurgency.

[70] The NSA had been operating in Afghanistan since at least July 2008, but by 2010 it was transitioning towards a much larger footprint with the construction of a new data centre for its Real Time Regional Gateway (RT-RG) at Bagram Air Field. NSA’s representative at CENTCOM claimed that the RT-RG ‘helped us to become experts in providing the “where” part of a conversation’: Henrik Moltke, ‘Mission creep’, The Intercept, 29 May 2019. The RT-RG could be accessed from multiple locations, and a report in June 2011 required 15 pages to describe a single day’s monitoring at the NSA station at Kandahar Air Field: Scott Shane, ‘No morsel too minuscule for all-consuming NSA’, New York Times, 2 November 2013.

[71] These observations (at 0757 and 0833) are wholly redacted from the LA Times transcript but appear in the communications transcript contained in the consolidated file (pp. 1959, 1963). Chatty Kathy was a pull-string ‘talking doll’ from the 1960s (sic).

[72] The SALT reports from the GFC were transmitted by radio to SOTF-South and transcribed (p. 948); the language is not the Taliban’s, of course, who would not refer to ‘coalition forces’. The report was based on ICOM and inferences about the location of the transmissions, but it also reinforced the direct reports of headlights assumed to be flashing not simply from but between the north and the south.

[73] ‘Positive identification’ (PID) turned out to be one of the most frequently used and least understood terms throughout that long night. It is correctly defined as ‘the reasonable certainty that a functionally and geospatially defined object of attack is a legitimate military target in accordance with the law of war and the applicable ROE’: Close Air Support, p. III.38.

[74] The reference to the ROE was redacted from the communications transcript but appears in an e-mail from the commanding officer of 15th Reconnaissance Squadron at Creech Air Force Base, dated 6 March 2010 (p. 890). The ROE themselves remain classified, but 421 referred to ‘hostile intent’ and 422 to a ‘hostile act’; these were standing ROEs that required no prior authorization for US forces to exercise the right to self-defence. The situation was different for other coalition forces; most European states do not consider hostile intent or hostile act sufficient to trigger self-defence, which is viewed as a response to an immediate, ongoing attack. In contrast, the US treats self-defence as a response to an imminent (not immediate) hostile intent or hostile act. See Erica [E.L.] Gaston, When looks could kill: emerging state practice on self-defence and hostile intent (Global Public Policy Institute, 2017) pp. 18-21; Camilla Guldahl Cooper, NATO Rules of Engagement: on ROE, self-defence and the use of force during armed conflict (Leiden: Brill, 2020).

[75] As I will show, this was highly problematic, part of what de Volo calls (with specific reference to the Uruzgan attack) a ‘high-tech patriarchal imperialism’ in which ‘brown women and children need protection and brown men need killing’: Lorraine Bayard de Volo, ‘Unmanned? Gender recalibrations and the rise of drone warfare’, Politics & Gender 12 (2016) 50-77: 70.

[76] This message is redacted from the communications transcript but appears in an e-mail from the commanding officer of 15th Reconnaissance Squadron dated 6 March 2010 (p. 896).

[77] Gaston, When looks could kill, pp. 50-1.

[78] Hence Petit insisted the question revolved around distinguishing ‘what civilians are innocent versus bad’ (p. 1105). In response to a question about the presence of ‘possible civilians’ he rephrased it: ‘Everyone in that convoy, whether women, children or males with an AK [47], are they a threat to us or are they innocent non-combatant civilians?’ He and his staff regularly discussed the meaning of ‘civilian’, he said, and it was confusing: ‘We kill civilians all the time, called Taliban, and they are armed and shooting at us.’ What mattered, in his view, was whether those in the vehicles were non-combatants (p. 1113). More generally see Derek Gregory, ‘The death of the civilian?’ Society & Space 24 (2006) 633-38 on Alan Dershowitz’s demand that civilian casualties be ‘recalibrated’ to show how many of them ‘fall closer to the line of complicity and how many fall closer to the line of innocence.’

[79] I am indebted to Christiane Wilke for my phrasing and for helpful discussions on this issue. As she writes, ‘it is not clear what Afghans should do or avoid in order to be recognized as civilians’: Wilke, ‘Seeing and unmaking civilians’, p. 1056. More generally, she told me: ‘I’m … really disturbed by the ways in which the burden of making oneself legible to the eyes in the sky is distributed: we don’t have to do any of that here, but the people to whom we’re bringing the war have to perform civilian-ness without fail’ (pers. comm., 23 January 2017).

[80] FMV image quality is measured on the Video-National Imagery Interpretability Rating Scale; the Predator’s Multi-Spectral Targeting System-A had a rating of 6 which, combined with a transmission speed of 1-3 Mbps, was sufficient to track the movement of cars and trucks and to detect the presence of an individual apart from a group; but it could not isolate and track an individual in a group nor identify their gender. The MTS-B developed for the MQ-9 Reaper allowed for dramatically enhanced resolution and coverage. See Pratap Chatterjee and Christian Stork, Drone Inc: marketing the illusion of precision killing (San Francisco: CorpWatch, 2017) pp. 11-12, 25-6.

[81] By the time the Safety Observer entered the Ground Control Station, shortly before the engagement, the Predator crew had made multiple assessments about the passengers; even if he meant to say that the crew deferred to the screeners in passing information this was also demonstrably inaccurate, as I show below.

[82] Lisa Parks, ‘Drones, infrared imagery and body heat’, International journal of communication 8 (2014) 2518-21: 2519; Col Andrew Milani, ‘Pitfalls of technology: a case study of the battle on Takhur Ghar Mountain, Afghanistan’ (US Army War College, 2003) p. 25; Cockburn, Kill-Chain, pp. 125-6.

[83] The reconstruction of the strike in National Bird is misleading in this (I think crucial) respect: it consistently shows a remarkably clear image of all three vehicles.

[84] The ROVER system was originally developed in 2001 to provide a video link from Predators to AC-130 gunships, but the possibility – and importance – of enhancing the system to allow ground troops to receive the FMV feed was first proposed by a Special Forces Warrant Officer serving in Afghanistan: ‘If only there were some way for me to see what the Predator is seeing…’ He took his idea to the Big Safari team at Wright-Patterson Air Force Base, and a prototype was delivered to the 3rd Special Forces Group in 2002. Ironically the original system was so bulky it had to be carried in a Humvee – though by 2010 the system (by then, ROVER 5) had become much smaller and more portable. See Rebecca Grant, ‘The ROVER’, Air Force Magazine 96 (8) (2013) 38-42; Bill Grimes, The history of BIG SAFARI (Bloomington, IND: Archway, 2014) pp. 336-7.

[85] At 0602 the JTAC asked the Predator crew for a ROVER frequency and band, and a few minutes later asked them to push the video feed ‘to Cobra [Tinsley] base, that’s probably [going to] serve us best’; 30 minutes later the link to the JTAC at FB Tinsley was established though the quality of the feed was poor. The Predator crew raised no questions about the request, but at 0817 – half an hour before the attack on the vehicles – the sensor operator was puzzled why the JTAC ‘asked us if they were moving at that one point when they were stopped forever’ and – given the repeated problems with their own radio communication with the JTAC – concluded that perhaps ‘he’s got an intermittent ROVER feed.’ The pilot was left ‘wondering if he’s not sitting with the guys actually watching.’ The answer to all these puzzles was, of course, that the JTAC had no ROVER.

[86] Nasser Hussain, ‘The sound of terror: phenomenology of a drone strike’, Boston Review, 16 October 2013.

[87] Those who operate remote platforms consistently claim that they are not thousands of miles from the battlespace but just 18 inches away: the distance from eye to screen. Here is Col Peter Gersten, the commander of the 432nd Air Expeditionary Wing: ‘There’s no detachment… Those employing the system are very involved at a personal level in combat. You hear the AK-47 going off, the intensity of the voice on the radio calling for help. You’re looking at him, 18 inches away from him, trying everything in your capability to get that person out of trouble’: Megan McCloskey, ‘The war room: Daily transition between battle, home takes a toll on drone operators’, Stars & Stripes, 27 October 2009.

[88] See Derek Gregory, ‘The territory of the screen’, Mediatropes 6 (2) (2016) 126-147: 146-7.

[89] He elaborated the burden of this trust relationship for the GFC: ‘If he [fails] to act, it’s his guys get killed, if he acts and the information is wrong then he has to suffer the consequences of [civilian casualties] as a result of receiving bad information.’

[90] The Predator pilot’s radio communications with the JTAC improved when the aircraft was in its southern orbit towards Khod but became weak again whenever it circled back, prompting the sensor operator to exclaim at 0456: ‘We need to get this JTAC in mIRC’: but without the ROVER laptop or a data-enabled satellite phone the JTAC would have had no access to that either.

[91] The USAF investigation made much of ‘the extensive Intercepted Communications (ICOM) chatter correlated with FMV and observed ground movement’ (Commander-directed operational assessment, p. 1). ‘One of the most compelling elements of all the testimony is the recurring reference to ICOM chatter and how it seemed to correlate with observed movements,’ Otto wrote (artfully dropping the direct reference to the video feed), and he concluded that ‘the unusually strong correlation perceived by the GFC’ between the two was the ‘strongest element in his decision to engage’ the vehicles (p. 36). But Gaston, When looks could kill, p. 53 suggests that the very existence of ICOM (and signals intelligence more generally) could convert what would otherwise have remained indeterminate into a black-and-white calculus: ‘Troops with less access to intelligence and eavesdropping resources to verify threats may have been less willing to make a hostile intent determination in ambiguous situations.’

[92] The MC asked SOTF-South to arrange permission to stay, and the airman monitoring the FMV feed there – the Intelligence Tactical Coordinator – explained that ‘it looked very nefarious’ and ‘we assessed this to be a bad situation’ (p. 1374). Permission had to be obtained from the Intelligence, Surveillance and Reconnaissance Cell (ISARC) which co-ordinated all ISR assets; it is not clear whether this was based in Afghanistan or whether it was located at CENTCOM’s Combined Air Operations Center in Qatar. Permission finally came through at 0528.

[93] Again the basis for describing their actions as ‘tactical movement’ was never given; the screeners described the movement as ‘adult males, standing and sitting’ (pp. 21-2).

[94] Although the AC-130 has an extended range its loiter time over a target is around 4½ hours, whereas a Predator could remain in the air for 24 hours while the crew in the Ground Control Station rotated through two or three shifts. From the pilot’s message at 0756 the Predator would have been able to remain on station (referred to as ‘playtime’) until 1600.

[95] Had the AC-130 done so, the proximity to the compounds would presumably have violated the Tactical Directive.

[96] The Mission Operations Commander of the screeners at Hurlburt Field said much the same: ‘It’s Afghanistan, there’s going to be weapons down there in any large group of men’ (p. 592).

[97] One of the sergeants with ODA 3142 offered another, wholly innocuous reading that said much about the quality of the video feed and the cultural distance that confounded its interpretation: ‘After looking at the video afterwards, someone was saying when the vehicles stopped … there might be people pulling security. When I looked at [the] video they also could have been taking a piss. Whoever was viewing the video real-time … needs to be someone that knows the culture of the people’ (p. 501).

[98] The Joint Prioritised Effects List (JPEL) is a list of named individuals implicated in Taliban and/or al-Qaeda operations in Afghanistan and Pakistan pre-authorised for ‘kill or capture’ by coalition forces; Joint Special Operations Command was instrumental in its compilation, which also involved intelligence agencies from the US and NATO partners, and Special Operations forces took the lead in its execution. See Nick Davies, ‘Afghanistan war logs: Task Force 373 – special forces hunting top Taliban’, Guardian, 25 July 2010; Britain’s Kill List (Reprieve, 2012); Jacob Appelbaum, Matthias Gebauer, Susanne Koelbl, Laura Poitras, Gordon Repinski, Marcel Rosenbach and Holger Stark, ‘A dubious history of targeted killing in Afghanistan’, Spiegel Online, 28 December 2014.

[99] McHale accepted ‘by a preponderance of the evidence’ that the GFC ‘engaged primarily based on a belief that the vehicles represented an imminent threat to the forces under his command’ (p. 28). But the hare continued to run: when Col Gus Benton, the commander of CJSOTF-A, was alerted to the developing situation shortly before the strike he said he assumed that what he saw on the Predator feed was ‘this JPEL [target] moving along this road’ (p. 530) (see below, pp. 00-00).

[100] The search for weapons continued after the strike because their presence amongst the wreckage would have helped to validate the attack. Even had it been successful – it wasn’t – it would not have been enough; McHale’s report emphasized that ‘positive identification of weapons is neither required nor sufficient for PID’ (p. 33).