It’s a great pleasure to announce a new journal, Digital War, edited by Andrew Hoskins and William Merrin (full disclosure: I’m on the Editorial Board) and published by Palgrave Macmillan (now part of Springer Nature):

There is no longer war, there is only digital war.

‘Digital War is understood as the ways in which digital technologies and media are transforming how wars are fought, experienced, lived, represented, reported, known, conceptualised, remembered and forgotten.

‘Digital War identifies, not a new form of war, but an entire, emergent research field. We provide a vital and dynamic forum for addressing cutting-edge developments, responding rapidly to new wars and moreover, agenda-setting in the digital environment.

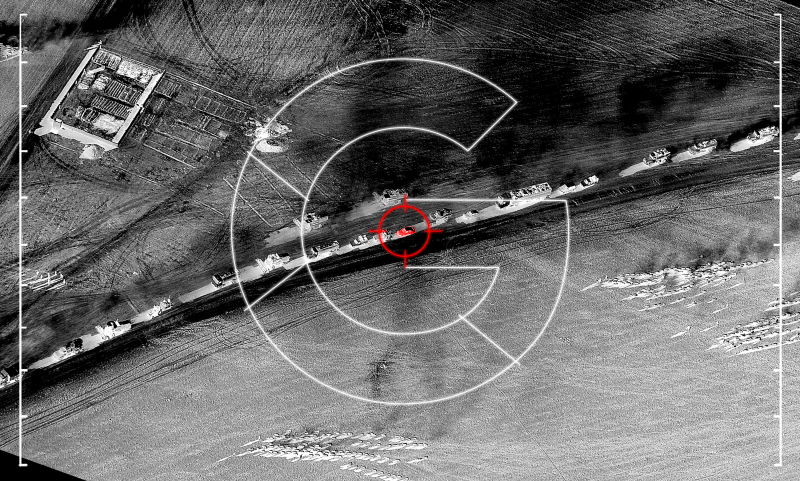

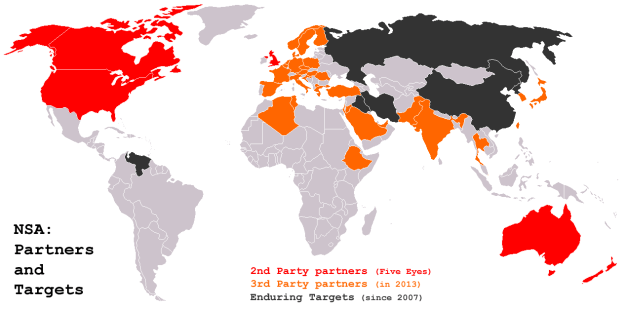

‘In recent years, Wikileaks brought us a new vision of our ongoing wars; lethal, autonomous robotics began to be publicly debated and a growing awareness of the revolutionary impact of social media in conflict-zones spread. Topics such as hacking, hacktivism, digital civil-wars and government surveillance came to the fore; the success of Islamic State meant everyone was discussing online terrorism and propaganda; wars across the world play out now on social media platforms and people’s smartphones with participation from increasingly indistinct militaries, citizens, states, and new developments in military A.I., simulation, augmentation and weaponry made the news. Soon, everyone became conversant with the subject of cyberwar and nation-state and hacking group cyberattacks, and discussions of 4chan, 8chan, trolling, the weaponization of Facebook, Twitter-bots, Troll-Farms, and Russian information war became common.

‘This journal thus sees digital war as having gone mainstream.

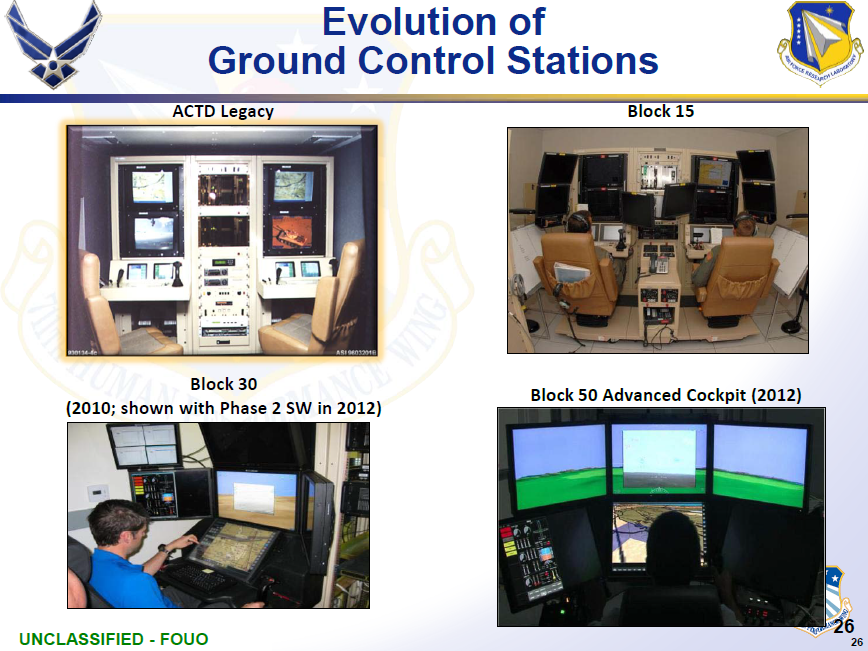

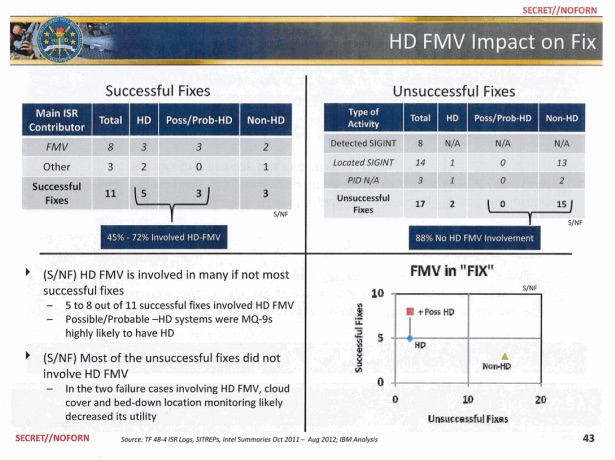

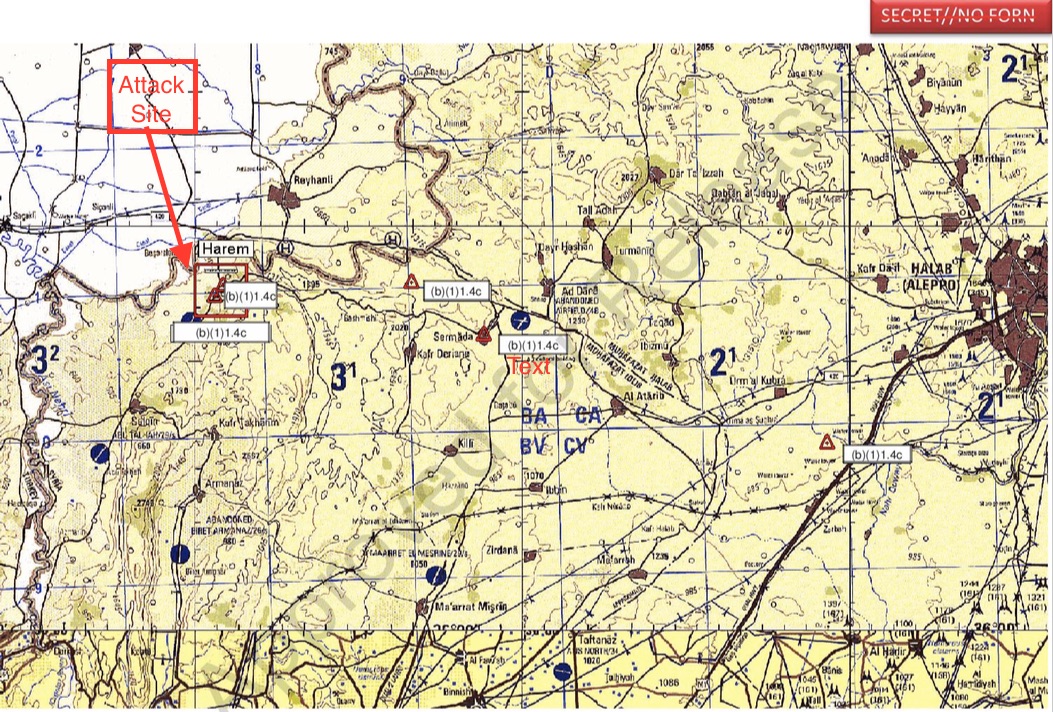

‘This journal meets a need for a scholarly and practitioner forum on a nascent yet already dominant field. The political, social and cultural importance of digital war has increased dramatically, with topics such as drones and cyberwar becoming key contemporary issues, whilst the rise of social media has revolutionized societal communication, impacting on how wars are fought and known and experienced, as seen, for example, in Gaza (2014), Syria (2011-present) and Ukraine (2014). New developments in information war, such as the in/visible Russian campaign against the US and Europe, threaten western electoral processes as well as broader social cohesion, whilst hacking groups of uncertain affiliation continually attack governments, companies and organisations with cyberattacks seeking to damage systems, or exfiltrate sensitive political, economic or military information. New technological developments in simulations, wearable technologies and human augmentation have direct military applications whilst we are already seeing a gradual automatization of weapons systems with the increasing application of A.I. in the military (such as in pilotless drones) and investment in robots in the US, Russia and China etc.

‘War’ itself is being transformed today as the traditional legal definitions that have governed its declaration, identification and operation no longer fit the digital reality we see around us. The aim of this journal is to critically explore what war means today, its future trajectories and consequences.

‘Digital War is driven ultimately by quality of scholarship, but rather than being restricted to publishing exclusive and narrow academic work, we welcome a range of interventions and responses, including theoretical, polemical and speculative pieces from experts in their field. Our aim is not only to be the intellectual centre of debate around contemporary war but also for emerging technological developments and their implications for the future of and as the leading and radical forum for discussions about developments in conflict.’

You can access the inaugural issue here. And you can follow the blog here.

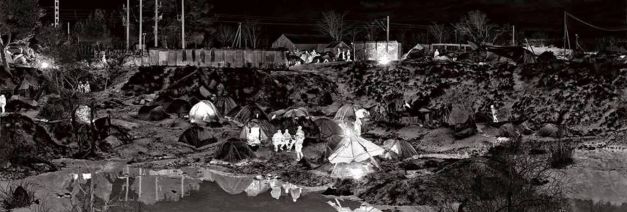

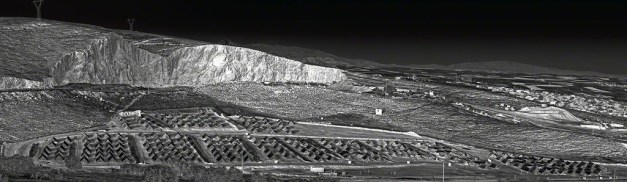

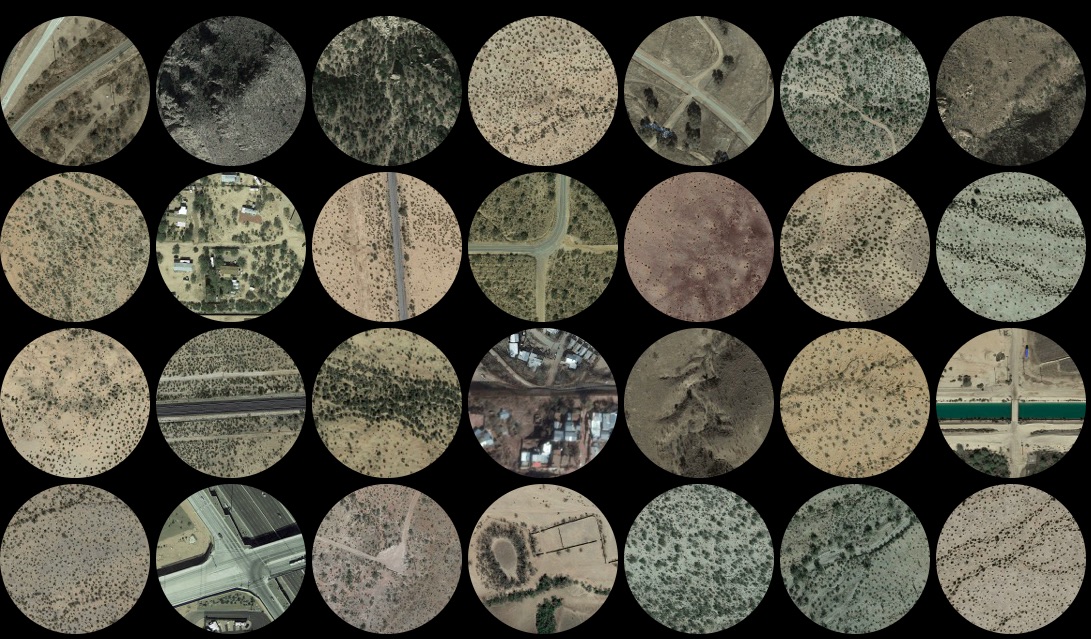

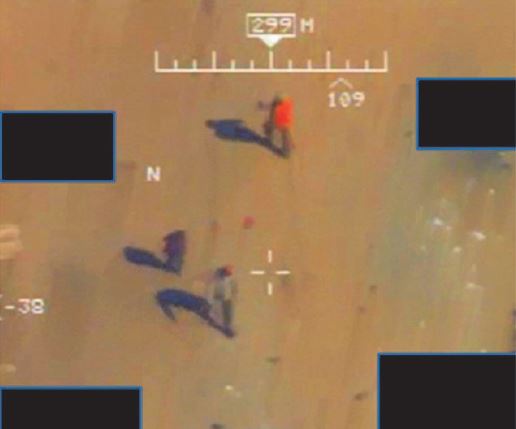

(The lead image is by Shona Illingworth, from her essay with Andrew in the first issue, ‘Inaccessible war: media, memory, trauma and the blueprint’.)